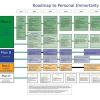

Transhumanists and rationalists hold two views on the best strategy: the first is that one must invest in life extension technologies, and the second is that it is necessary to create an aligned AI that will solve all problems, including giving us immortality or even something better. In our article, we showed that these two points of view do not contradict each other, because the development of AI will be the main driver of increased life expectancy in the coming years. As a result, even people living today can benefit from (and contribute to) the future superintelligence in several ways.

Firstly, machine learning and narrow AI will make it possible to study aging biomarkers and combinations of geroprotectors, and this will increase life expectancy by several years. That means tens of millions of people will live long enough to see the creation of the superintelligence (whenever it happens) and will be saved from death. In other words, the current application of narrow AI to life extension gives us a chance to reach “longevity escape velocity”, and the rapid growth of AI will be the main factor that, like a tailwind, helps to increase this velocity.
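The idea of longevity escape velocity can be illustrated with a toy calculation. The sketch below is not taken from the article: the function name, its parameters, and the linear growth of yearly gains are illustrative assumptions. It treats escape velocity as the point where medicine adds at least one year of remaining life expectancy per calendar year, and checks whether a person survives long enough to reach it.

```python
def years_until_escape(remaining_years, initial_gain, gain_growth, horizon=200):
    """Toy model of longevity escape velocity (illustrative assumptions only).

    remaining_years: remaining life expectancy today, in years
    initial_gain:    years of life expectancy added per calendar year today
    gain_growth:     how much that yearly gain grows each year (assumed linear)

    Returns the number of years until the yearly gain reaches 1.0,
    or None if remaining life expectancy runs out first.
    """
    remaining = float(remaining_years)
    gain = float(initial_gain)
    for year in range(horizon + 1):
        if gain >= 1.0:
            # Each passing year is now fully offset by new gains.
            return year
        # One calendar year passes: lose one year, win back `gain` years.
        remaining += gain - 1.0
        if remaining <= 0:
            return None
        gain += gain_growth
    return None


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to show both outcomes.
    print(years_until_escape(10, 0.25, 0.0625))
    print(years_until_escape(4, 0.25, 0.0625))
```

In this toy model, even a gain of a few extra years matters: it can be the difference between running out of time and staying alive until the yearly gains catch up.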

Secondly, we can already make use of some capabilities of the future superintelligence by collecting data for “digital immortality”. Based on these data, the future AI could reconstruct an exact model of our personality and also solve the identity problem. The collection of medical data about the body helps in two ways: now, because the data can train machine learning systems to predict diseases, and in the future, when the data become part of digital immortality. By subscribing to cryonics, we can also tap into the power of the future superintelligence, since without it a successful reading of information from the frozen brain is impossible.

Thirdly, there are some grounds for assuming that medical AI will be safer. Admittedly, fooming (rapid recursive self-improvement) can occur with any AI. But the development of medical AI will accelerate the development of brain-computer interfaces (BCIs), such as Neuralink, and this will increase the chance that AI appears not separately from humans but as a product of integration with a person. As a result, a human mind will remain part of the AI, and the human will direct its goal function from within. This is also Elon Musk’s vision, and he wants to commercialize Neuralink through the treatment of diseases. In addition, if we assume that the orthogonality thesis may have exceptions, then a medical AI aimed at curing humans will be more likely to have benevolence as its terminal goal.

As a result, by developing AI for life extension, we make AI safer and increase the number of people who will survive until the creation of superintelligence. Thus, there is no contradiction between the two main approaches to improving human life through new technologies.

Moreover, radical life extension with the help of AI requires concrete steps right now: collecting data for digital immortality, joining patient organizations in order to combat aging, and participating in clinical trials involving combinations of geroprotectors and computer analysis of biomarkers. We see our article as a motivational pitch that will encourage the reader to fight for personal and global radical life extension.

To substantiate these conclusions, we conducted an extensive analysis of existing start-ups and research directions in the field of AI applications for life extension, and we identified the beginnings of many of these trends, reflected in the specific business plans of companies.

Michael Batin, Alexey Turchin, Sergey Markov, Alice Zhila, David Denkenberger

“Artificial Intelligence in Life Extension: from Deep Learning to Superintelligence”

Informatica 41 (2017) 401–417: http://www.informati...ticle/view/1797